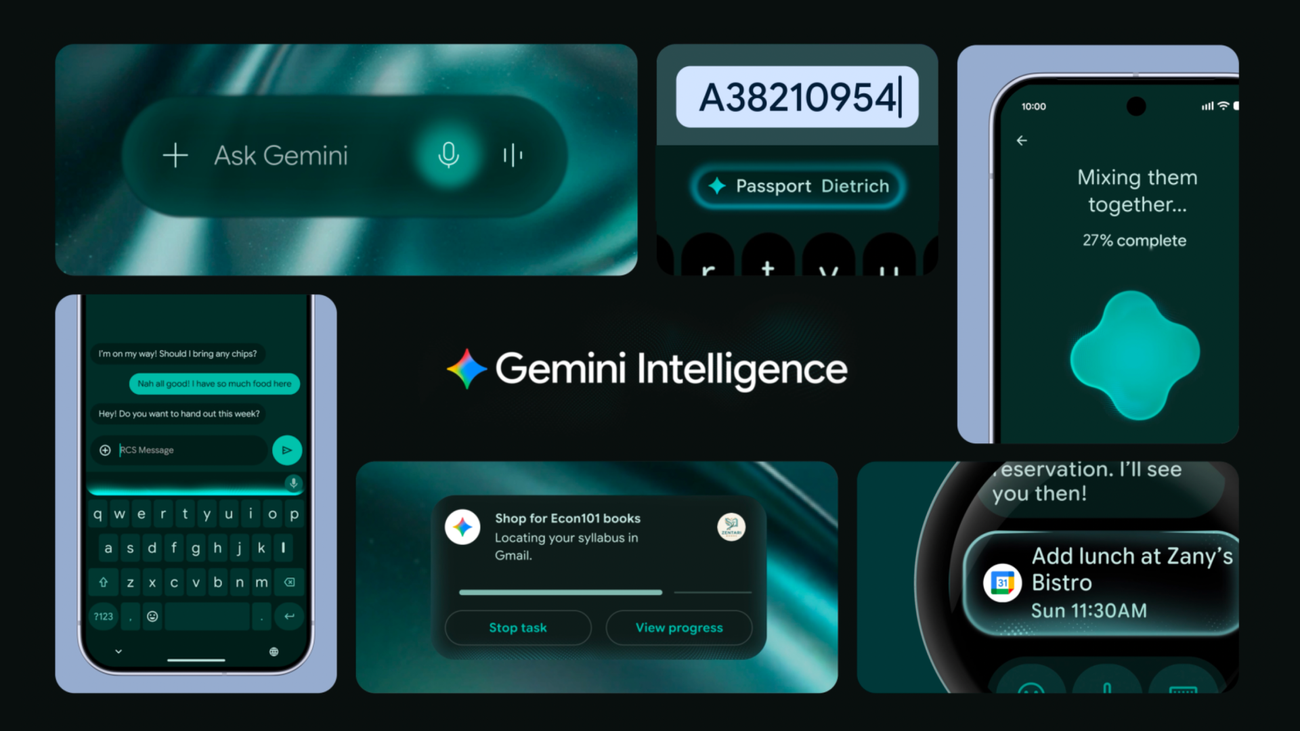

Google Turns Android Into an Intelligence System With Gemini

At the Android Show 2026, Google unveiled Gemini Intelligence — an agentic AI layer that automates tasks across apps, introduces natural-language widget building, and reshapes how Android developers need to think about their apps.

Image by Google

Google Turns Android Into an Intelligence System: What Developers Need to Know

Google made a major Android AI announcement on May 12 at the Android Show: I/O Edition, repositioning Android from a mobile operating system to what the company calls an "intelligence system." For developers, this is the opening act ahead of Google I/O next week, but the implications for apps, APIs, and the Android platform are already concrete.

The centerpiece is Gemini Intelligence, an AI layer embedded directly at the Android OS level that can automate multi-step tasks across apps on behalf of users, fill out complex forms using personal context, and let users build their own custom widgets through natural language. "We're transitioning from an operating system to an intelligence system," Sameer Samat, VP of Android, told CNBC.

App Automation: A New Interaction Layer for Android Apps

Gemini Intelligence can now navigate multi-step workflows inside Android apps without users manually switching between them. Google demonstrated scenarios like booking a front-row spin class, finding a course syllabus in Gmail and then adding required textbooks to a shopping cart, or building a grocery delivery cart from a photo of a handwritten list. Gemini works in the background, with progress tracked via notifications, and requires explicit user confirmation before completing any transaction.
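Google has not published how this flow is implemented, so the following is only a minimal, language-neutral sketch of the behavior described above: steps run in order, each one emits a progress notification, and anything marked as a transaction waits for explicit approval. Every name here (`Step`, `TaskRun`, `run_task`, `confirm`) is hypothetical and does not correspond to any Android or Gemini API.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Step:
    """One action in a hypothetical automated task."""
    description: str
    is_transaction: bool = False  # e.g. a purchase or a booking


@dataclass
class TaskRun:
    """Records what happened so the flow can be inspected afterwards."""
    notifications: List[str] = field(default_factory=list)
    completed: List[str] = field(default_factory=list)
    cancelled: bool = False


def run_task(steps: List[Step], confirm: Callable[[Step], bool]) -> TaskRun:
    """Run steps in order, pausing for confirmation before any transaction.

    `confirm` is an assumed stand-in for whatever prompt the user would see;
    it returns True to approve the step and False to decline it.
    """
    run = TaskRun()
    for index, step in enumerate(steps, start=1):
        # A declined transaction stops the task before the step executes.
        if step.is_transaction and not confirm(step):
            run.cancelled = True
            run.notifications.append(f"Stopped before: {step.description}")
            break
        run.completed.append(step.description)
        run.notifications.append(
            f"Step {index}/{len(steps)} done: {step.description}"
        )
    return run


if __name__ == "__main__":
    steps = [
        Step("Find the course syllabus"),
        Step("Add required textbooks to the cart"),
        Step("Place the order", is_transaction=True),
    ]
    declined = run_task(steps, confirm=lambda step: False)
    print(declined.completed)
    print(declined.notifications[-1])
```

In this model, declining the final prompt leaves the cart filled but the order unplaced, which is the kind of state an app would need to tolerate if an automated session ends partway through.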

Google's Android Developers Blog published a separate developer-focused post alongside the consumer announcement, noting that Gemini Intelligence "creates another avenue for user engagement, driving high-intent traffic to your app without requiring code or major engineering work from you." Apps must be eligible for Gemini to interact with them, so developers should review how their apps behave under the new automation model ahead of Android 17.

Create My Widget: Vibe Coding Comes to the Home Screen

The most directly coding-relevant feature is Create My Widget, which lets users describe a widget in natural language and have Gemini build it on the spot. A user can ask for "a weather widget that shows only wind speed and rain," or "three high-protein meal prep recipes every week," and get a functional, adaptive widget added directly to their home screen. The feature is powered by RemoteCompose and also works on Wear OS watches.

This is vibe coding brought to the Android widget layer. For developers, it signals that Google is comfortable letting users generate functional UI through natural language — a capability that mirrors what tools like Lovable and v0 offer for web developers, now landing at the OS level.

Gemini in Chrome and Intelligent Autofill

Starting in late June, Gemini in Chrome for Android will support auto browse, a feature that automates tasks such as booking appointments and reserving parking spots directly from the browser. Autofill is being rebuilt with Gemini Personal Intelligence, which lets it complete complex forms automatically by pulling relevant data from connected apps. Both features are opt-in and can be disabled in settings at any time.

New Hardware Surface: Googlebook

Google also previewed Googlebook, a new category of premium Android-powered laptops from Acer, ASUS, Dell, HP, and Lenovo arriving this fall. The first models will ship with Gemini built in, full Android app compatibility, and a Magic Pointer that summons Gemini when the user wiggles the cursor. For developers, Googlebook is another adaptive UI target on top of an Android ecosystem that already spans phones, tablets, foldables, cars, and watches.

What's Unconfirmed

Gemini Intelligence features will start rolling out this summer on Samsung Galaxy S26 and Google Pixel 10, with watches, cars, glasses, and laptops following later in 2026. Google has not yet published developer documentation on how third-party apps can opt into deeper Gemini Intelligence integration beyond being passively automatable.

Google I/O runs May 19–20, where Gemini 4 is expected alongside the full developer API story for the new platform. For Android developers, that is the event to watch.