OpenAI Ships Symphony: Codex Agents Now Run Your Linear Board

OpenAI open-sourced Symphony today, an orchestration spec that maps every open Linear issue to a dedicated Codex agent workspace and removes the per-session supervision bottleneck. OpenAI reports a 500% increase in landed pull requests among some internal teams in the first three weeks.

OpenAI released Symphony today — an open-source orchestration specification that eliminates the per-session supervision bottleneck that has limited developer throughput with coding agents. Instead of manually managing parallel Codex sessions across browser tabs and terminals, Symphony maps every open Linear ticket to a dedicated agent workspace and keeps agents running until the work is done.

The reference implementation, built in Elixir and licensed under Apache 2.0, is available on GitHub. The specification itself is language-agnostic: according to OpenAI, Codex also produced implementations in TypeScript, Go, Rust, Java, and Python during the company's internal iteration.

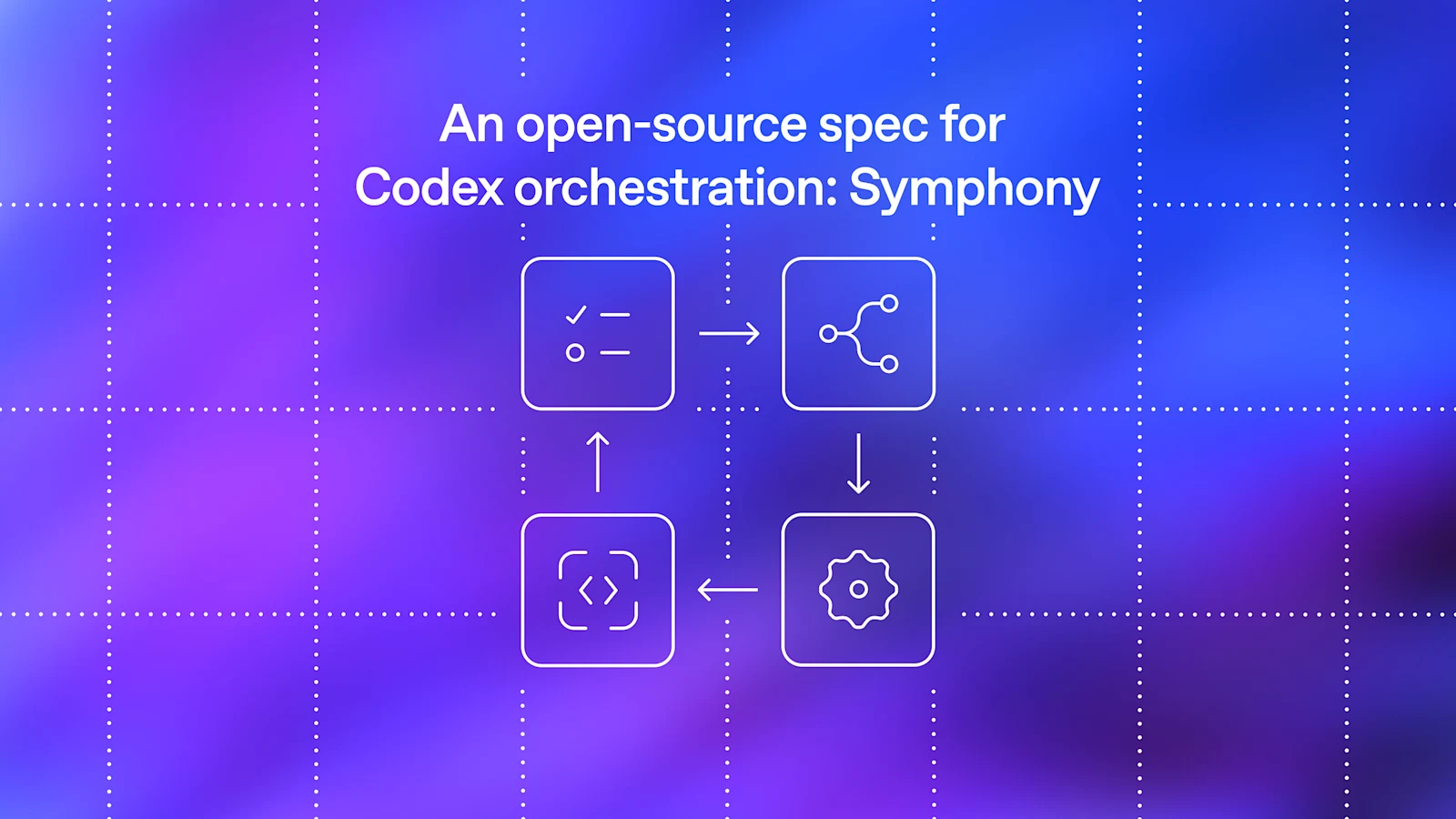

How It Works

Symphony is a state machine, not an IDE plugin or a new product surface. It continuously polls a Linear board, assigns a Codex agent to each open issue in an isolated workspace, and manages the full lifecycle: if an agent crashes or stalls, Symphony restarts it; when new tickets appear, it picks them up on the next poll.
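The poll-and-reconcile cycle described above can be sketched in a few lines of Python. This is a minimal illustration, not Symphony's actual code: the `Agent` and `Orchestrator` classes, the `reconcile` method, and the issue IDs are all hypothetical, and real workspaces, process supervision, and Linear API calls are left out.

```python
from dataclasses import dataclass, field


@dataclass
class Agent:
    issue_id: str
    alive: bool = True   # a real orchestrator would detect crashes and stalls
    restarts: int = 0


@dataclass
class Orchestrator:
    # issue_id -> Agent; one agent per open issue (assumed model)
    agents: dict = field(default_factory=dict)

    def reconcile(self, open_issue_ids):
        """Run one poll cycle and return the actions taken, in order."""
        actions = []
        open_ids = set(open_issue_ids)

        # Start an agent for every open issue that does not have one yet.
        for issue_id in sorted(open_ids - set(self.agents)):
            self.agents[issue_id] = Agent(issue_id)
            actions.append(("start", issue_id))

        # Restart agents that crashed or stalled since the last poll.
        for issue_id in sorted(open_ids & set(self.agents)):
            agent = self.agents[issue_id]
            if not agent.alive:
                agent.alive = True
                agent.restarts += 1
                actions.append(("restart", issue_id))

        # Retire agents whose issue is no longer open.
        for issue_id in sorted(set(self.agents) - open_ids):
            del self.agents[issue_id]
            actions.append(("stop", issue_id))

        return actions
```

Calling `reconcile` once per poll interval with the current list of open issue IDs keeps the set of running agents aligned with the board. Because each cycle compares desired state with actual state, a missed poll or an orchestrator restart is corrected on the next pass.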

The key design choice is making the issue tracker — not the terminal or the IDE — the control plane. Ticket statuses drive agent behavior. Some issues produce multiple pull requests across repositories; others are pure investigation that never touches the codebase. Symphony handles both without requiring engineers to supervise individual agent sessions.
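One way to picture "ticket statuses drive agent behavior" is a lookup from issue status to orchestrator action. The sketch below is an assumption for illustration only: the status names and the `Action` values are hypothetical, and a real board defines its own workflow states.

```python
from enum import Enum


class Action(Enum):
    IGNORE = "ignore"
    RUN_AGENT = "run_agent"
    WAIT_FOR_HUMAN = "wait_for_human"
    CLEAN_UP = "clean_up"


# Hypothetical status names; these are not taken from the Symphony spec.
STATUS_TO_ACTION = {
    "Backlog": Action.IGNORE,
    "Todo": Action.RUN_AGENT,
    "In Progress": Action.RUN_AGENT,
    "In Review": Action.WAIT_FOR_HUMAN,
    "Done": Action.CLEAN_UP,
    "Canceled": Action.CLEAN_UP,
}


def next_action(status: str) -> Action:
    # Unmapped statuses fall back to IGNORE so agents never act on
    # workflow states that nobody has configured.
    return STATUS_TO_ACTION.get(status, Action.IGNORE)
```

The mapping is keyed on the issue rather than on a pull request, so an investigation-only ticket and a ticket that produces several pull requests follow the same path. Moving a ticket between columns is the only input the engineer has to provide.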

The system runs on devboxes and operates around the clock. One OpenAI engineer made three significant code changes from the Linear mobile app while staying in a cabin with weak Wi-Fi, according to the company. The agents handled the rest.

Output and Adoption Signal

OpenAI reported a 500% increase in landed pull requests among some internal teams in the first three weeks of Symphony deployment. Separately, Linear founder Karri Saarinen highlighted a spike in new workspaces created on the platform as Symphony was released, a sign of outside interest rather than a check on OpenAI's internal figure.

The deeper shift is cognitive rather than mechanical. When engineers stop supervising individual Codex sessions, the perceived cost of each code change drops. Fixes that previously weren't worth the human overhead become viable. Teams move from managing coding agents to managing work.

Security Architecture

Symphony is built on the Codex App Server — the programmatic JSON-RPC interface for Codex. To prevent OAuth token exposure to subagents, it uses a dynamic linear_graphql tool call that executes requests against Linear directly from the orchestrator, keeping the access token out of agent containers.
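The token-isolation pattern can be illustrated with a small orchestrator-side handler. This is a sketch under stated assumptions, not Symphony's implementation: `make_linear_graphql_tool`, the `send` transport callable, and the shape of the tool arguments are hypothetical, and the `Bearer` header format is assumed for an OAuth access token. The URL is Linear's public GraphQL endpoint.

```python
import json
from typing import Callable

LINEAR_GRAPHQL_URL = "https://api.linear.app/graphql"


def make_linear_graphql_tool(token: str, send: Callable[[str, dict, bytes], dict]):
    """Build a tool handler that runs in the orchestrator process.

    `send(url, headers, body)` is an injected transport (hypothetical) that
    performs the HTTP POST and returns the decoded JSON response.
    """

    def handle_tool_call(arguments: dict) -> dict:
        # The agent supplies only the query and variables, never credentials.
        body = json.dumps({
            "query": arguments["query"],
            "variables": arguments.get("variables", {}),
        }).encode("utf-8")
        headers = {
            "Content-Type": "application/json",
            # Assumed header format; the token is attached here, outside
            # the agent container.
            "Authorization": f"Bearer {token}",
        }
        response = send(LINEAR_GRAPHQL_URL, headers, body)
        # Only the response payload is returned to the agent.
        return {"data": response.get("data"), "errors": response.get("errors")}

    return handle_tool_call
```

The agent sees a tool it can call with a GraphQL query and gets back data or errors. The credential lives only in the closure held by the orchestrator, so a compromised or misbehaving agent has nothing to leak.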

Approval policies are configurable. Teams can set full auto-approve for trusted codebases or require explicit human sign-off for sensitive operations. An optional Phoenix LiveView dashboard provides real-time visibility across all agent workspaces and issue states.
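As a rough illustration of how such a policy might be expressed, the sketch below models a per-repository setting with an explicit list of operations that always need a human. The `ApprovalPolicy` fields, the `needs_human` helper, and the operation names are hypothetical and do not reflect Symphony's or Codex's actual configuration schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ApprovalPolicy:
    # True means the agent may proceed without sign-off by default.
    auto_approve: bool = False
    # Operations that always require a human, even when auto_approve is on.
    require_human_for: frozenset = frozenset()


def needs_human(policy: ApprovalPolicy, operation: str) -> bool:
    """Return True when the operation must wait for explicit sign-off."""
    if operation in policy.require_human_for:
        return True
    return not policy.auto_approve


# Hypothetical policies for a trusted repo and a sensitive one.
trusted = ApprovalPolicy(auto_approve=True)
sensitive = ApprovalPolicy(
    auto_approve=True,
    require_human_for=frozenset({"deploy", "db_migration"}),
)
```

Defaulting `auto_approve` to `False` means a repository with no explicit policy falls back to human sign-off, which is the safer failure mode.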

What's Unconfirmed

OpenAI explicitly frames Symphony as a "low-key engineering preview for trusted environments." The 500% PR uplift figure is drawn from internal teams running on codebases already optimized for agentic operation, with agent-friendly repository structures, extensive automated tests, and guardrails built in advance. No independent validation has been published.

Analysts have flagged the scaling asymmetry: generation scales easily; validation does not. Higher PR volume shifts the review burden to engineers rather than eliminating it. Greyhound Research chief analyst Sanchit Vir Gogia described Symphony as beginning to resemble "a lightweight operating system for software delivery" — with the governance, security, and auditability questions that implies for enterprise adoption.

Symphony officially supports only Linear and OpenAI models for now. The company does not plan to maintain it as a standalone product; the repository is a reference implementation for teams to study, fork, and extend.